The Murdoch Press has rightly kept its pressure up on Google, with a cover story in The Times, ‘Adverts fund paedophile habits’ on November 24 (the online version, behind a paywall, is here).

Say what you will about its proprietor, but the Murdochs have been happy to go after the misdeeds of Google: the earlier case I’ve cited on this blog was in 2012, when Google was found to have hacked iPhones.

This time, YouTube is under fire for videos of children that were attracting comments from pædophiles, forcing the company to switch off comments, but it’s already lost advertising from Mars, Cadbury, Adidas, Deutsche Bank, Diageo, HP, and Lidl.

Buzzfeed has discovered even more disturbing content involving children, including from accounts that have earned YouTube’s verified symbol. Be prepared if you choose to click through: even the descriptions of the images are deeply unsettling.

Buzzfeed noted:

On Tuesday afternoon, BuzzFeed News contacted YouTube regarding a number of verified accounts — each with millions of subscribers — with hundreds of disturbing videos showing children in distress. As of Wednesday morning, all the videos provided by BuzzFeed News, as well as the accounts, were suspended for violating YouTube’s rules …

Many of the offending channels were even verified by YouTube — a process that the company says was done automatically as recently as 2016 …

Before YouTube removed them, these live-action child exploitation videos were rampant and easy to find. What’s more, they were allegedly on YouTube’s radar: Matan Uziel — a producer and activist who leads Real Women, Real Stories (a platform for women to recount personal stories of trauma, including rape, sexual assault, and sex trafficking) and who provided BuzzFeed News with more than 20 examples of such videos — told BuzzFeed News that he tried multiple times to bring the videos to YouTube’s attention and that no substantive action was taken.

On September 22, Uziel sent an email to YouTube CEO Susan Wojcicki and three other Google employees (as well as FBI agents) expressing his concern about “tens of thousands of videos available on YouTube that we know are crafted to serve as eye candy for perverted, creepy adults, online predators to indulge in their child fantasies.” According to the email, which was reviewed by BuzzFeed News, Uziel included multiple screenshots of disturbing videos. Uziel also told BuzzFeed News he addressed the concerns about the videos early this fall in a Google Hangout with two Google communications staffers from the United Kingdom, and that Google expressed desire to address the situation. A YouTube spokesperson said that the company has no record of the September 22nd email but told BuzzFeed News that Uziel did email on September 13th with screenshots of offending videos. The company says it removed every video escalated by Uziel.

I believe Uziel more, and I even believe that the 20 examples he provided to Buzzfeed were among the ones he escalated to Google. Unless he discovered them since, why would he show them to Buzzfeed while claiming that Google had been ineffective? Both The Times and Buzzfeed claim some of these abusive videos have each netted millions of views, and substantial sums for their creators.

And people wonder why we don’t continue to operate a video channel there, instead opting for Vimeo (for my personal account) and Dailymotion (for Lucire).

I don’t claim either is immune from this, but they seem to want to deal with harmful content more readily, principally because they’re not subject to the culture at Google and at Facebook, which appears to be: do nothing till you get into trouble publicly.

LaQuisha St Redfern shared with me this piece in The New York Times by a former Facebook employee, Sandy Parakilas, whose argument is summed up in this passage:

Facebook’s chief operating officer, Sheryl Sandberg, mentioned in an October interview with Axios that one of the ways the company uncovered Russian propaganda ads was by identifying that they had been purchased in rubles. Given how easy this was, it seems clear the discovery could have come much sooner than it did — a year after the election. But apparently Facebook took the same approach to this investigation as the one I observed during my tenure: react only when the press or regulators make something an issue, and avoid any changes that would hurt the business of collecting and selling data.

This behaviour is completely in line with my own experience with the two firms. Google, long-time readers may recall, libelled our websites for a week in 2013 by claiming they had malware. It was alleged that there were only two people overseeing the malware warnings, something which has since been disproved by a colleague of mine who was in Google’s employ at the time.

However, The Times alleges that YouTube’s monitoring of reported videos is in the hands of ‘just three unpaid volunteers’, hence the videos remained online.

I have some sympathy for YouTube given the volume of video that’s uploaded every second, making the site impossible to police by humans.

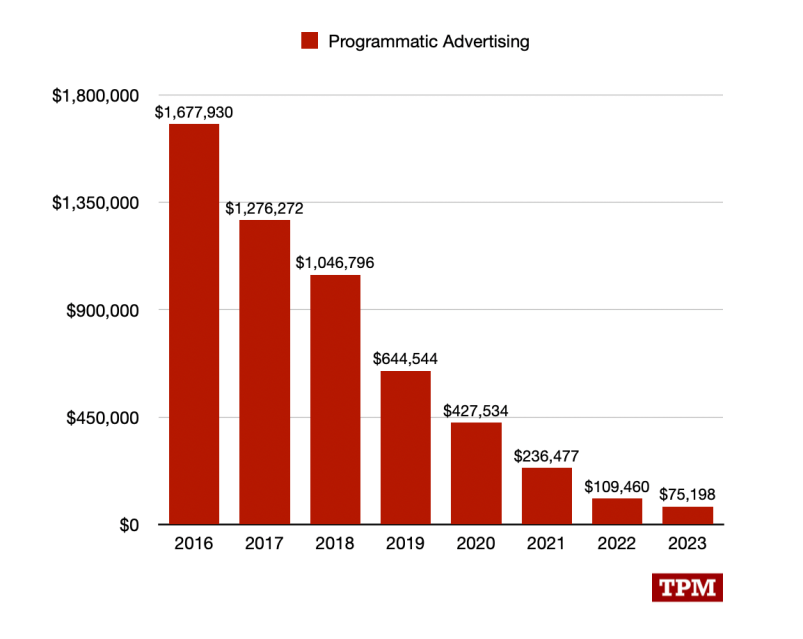

However, given how much the company earns off people—their advertising arm rakes in tens of thousands of millions a year—three unpaid volunteers is grossly negligent. If certain states’ attorneys-general had more balls, like the EU does, this could be something to investigate.

There’s also not much excuse for a company with Google’s resources not putting more people on the job of creating algorithms to get rid of this content.

Once it is rid of this content, Google needs to ensure that owners who are caught up with false positives have a real appeals process, not the dismal, ineffective one they had in place for Blogger in the late 2000s that, again, was only remedied on a case-by-case basis after a Reuters journalist had his blog removed. That can be done with human employees who can take an impartial look at things, not ones who are brainwashed into thinking that Google’s bots can never err, a viewpoint that many of Google’s forum volunteers possess, and by which they are consequently blinded.

Facebook’s inability to shut down fake accounts (I alerted them to an ‘epidemic’ in 2014) has been dealt with elsewhere, and now it’s biting them in the wake of President Trump’s election.

These businesses, which pay little tax, are clearly abusing their privilege. Since the mid-2000s, Google hasn’t been what I would consider a responsible corporate citizen, and I don’t think Facebook has ever been.
